Today’s engineering teams need to ship products faster than ever while maintaining high levels of security and reliability. However, many cloud-based quality assurance (QA) workflows still depend on expensive Software-as-a-Service (SaaS) testing environments that charge by the minute, by the test session, or by each parallel browser instance.

As release cycles shorten, these costs add up quickly and create a contradiction: the more often your Continuous Integration/Continuous Delivery (CI/CD) pipeline runs its tests, the bigger the testing bill.

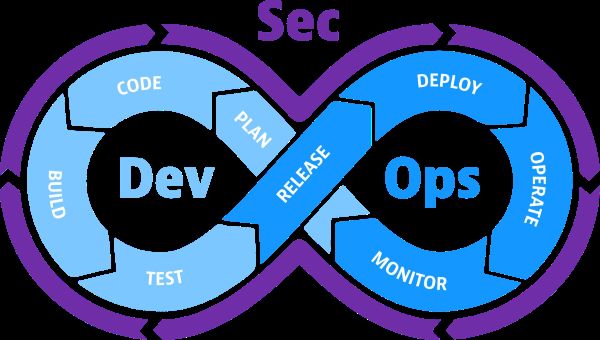

A new model is emerging. Instead of outsourcing execution to proprietary platforms, teams are embedding open source cross-browser testing tools directly into their infrastructure. By leveraging Kubernetes clusters or Docker-based environments, organizations can build self-hosted testing architectures that scale with the capacity of their own clusters and align with DevSecOps principles. This shift is not just technical; it is also economic and strategic.

From SaaS Testing Clouds to Self-Hosted Control

Commercial testing clouds solved an early problem: access to multiple browsers and operating systems without maintaining physical labs. However, the subscription model introduced a dependency on external infrastructure. Scaling meant increasing monthly invoices. Parallelization, which should accelerate innovation, became something to ration.

Open source tools change the equation. When Selenium Grid, Playwright, or similar frameworks are deployed inside a Kubernetes cluster, scaling becomes a matter of allocating additional pods. Instead of paying per minute, organizations pay for compute resources they already manage. If the cluster scales automatically based on load, so does test execution.
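To make "scaling becomes a matter of allocating additional pods" concrete, here is a minimal sketch that builds a Kubernetes Deployment for Selenium Grid browser nodes as a plain Python dictionary. The hub Service name `selenium-hub`, the resource requests, and the untagged image reference are assumptions for illustration; a real setup would pin an image version and tune resources for its own suites.

```python
import json


def browser_node_deployment(browser: str, replicas: int,
                            hub_host: str = "selenium-hub") -> dict:
    """Build a Deployment manifest for Selenium Grid nodes of one browser type.

    hub_host is a hypothetical Service name for the Grid hub / event bus.
    """
    if replicas < 0:
        raise ValueError("replicas must be non-negative")
    name = f"selenium-node-{browser}"
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Parallel capacity is just this number: more replicas, more browsers.
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "node",
                        # Assumption: unpinned image; pin a tag in practice.
                        "image": f"selenium/node-{browser}",
                        "env": [
                            {"name": "SE_EVENT_BUS_HOST", "value": hub_host},
                            {"name": "SE_EVENT_BUS_PUBLISH_PORT", "value": "4442"},
                            {"name": "SE_EVENT_BUS_SUBSCRIBE_PORT", "value": "4443"},
                        ],
                        # Illustrative requests, not a sizing recommendation.
                        "resources": {"requests": {"cpu": "1", "memory": "1Gi"}},
                    }],
                },
            },
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, so this output can be piped
    # to `kubectl apply -f -`.
    print(json.dumps(browser_node_deployment("chrome", 8), indent=2))
```

Changing the `replicas` argument from 8 to 40 is the whole "upgrade" in this model; whether the cluster can actually schedule those pods depends on the node capacity you have provisioned.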

Infrastructure-as-Code as the Foundation

At the core of this transformation is Infrastructure-as-Code (IaC). Tools like Terraform and Helm charts enable QA environments to be provisioned the same way as production services. Browser containers can be instantiated dynamically, isolated for each pipeline run, and destroyed afterward.

This ephemeral model aligns with zero-trust security practices. Instead of long-lived shared testing environments, each job spins up a clean, disposable stack. A compromised or drifted environment does not outlive the job that created it, and because every stack is rebuilt from its definition, a misconfiguration can be corrected in the next commit.
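The create, use, destroy lifecycle described above can be sketched as a context manager that gives each pipeline run its own Kubernetes namespace and always tears it down, even when tests fail. The `qa` prefix and the injectable `run` callable are assumptions made so the sketch can be exercised without a cluster; in a real pipeline `run` might wrap `subprocess.run(cmd, check=True)`.

```python
import re
from contextlib import contextmanager
from typing import Callable, Iterator, List


def namespace_for_run(run_id: str, prefix: str = "qa") -> str:
    """Turn a CI run identifier into a valid namespace name.

    Namespace names must be lowercase alphanumerics or '-', at most
    63 characters, and must not end with '-'.
    """
    slug = re.sub(r"[^a-z0-9-]+", "-", run_id.lower()).strip("-")
    return f"{prefix}-{slug}"[:63].rstrip("-")


@contextmanager
def ephemeral_namespace(run_id: str,
                        run: Callable[[List[str]], None]) -> Iterator[str]:
    """Create a per-run namespace and delete it when the block exits."""
    ns = namespace_for_run(run_id)
    run(["kubectl", "create", "namespace", ns])
    try:
        yield ns
    finally:
        # Deleting the namespace removes every browser pod created inside it.
        run(["kubectl", "delete", "namespace", ns, "--wait=false"])
```

A pipeline step would wrap its test invocation in `with ephemeral_namespace(run_id, run) as ns:` and deploy the browser stack into `ns`. Passing a recording function such as `commands.append` as `run` gives a dry run that lists the commands without executing them.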

The economic impact is equally significant. In traditional SaaS testing clouds, subscription tiers reserve a fixed number of parallel sessions, so teams pay for that capacity even when it sits idle. In a Kubernetes-driven architecture, autoscaled node groups respond to demand in real time. When pipelines are quiet overnight, resource usage drops. During peak release cycles, capacity expands without renegotiating contracts.

CI/CD Integration Without Friction

One of the strongest arguments for open source cross-browser testing tools is seamless CI/CD integration. Because they run within your environment, they connect directly to internal services, staging databases, and private APIs without exposing endpoints to third parties.

GitHub Actions, GitLab CI, or Jenkins pipelines can trigger test jobs that spin up browser containers within the same network boundary. Artifacts such as logs, screenshots, and videos are stored in internal object storage rather than external dashboards.
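As a rough illustration of a test job that stays inside the network boundary, the sketch below points Selenium's remote WebDriver at a Grid endpoint resolved through cluster DNS and writes a screenshot to a local directory that the pipeline could then upload to internal object storage. The `GRID_URL` variable, the `selenium-hub.qa` Service address, and the `artifacts` directory are hypothetical names chosen for this example.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def grid_url(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the Grid endpoint, defaulting to an in-cluster DNS name."""
    env = os.environ if env is None else env
    # Hypothetical default: a Service named "selenium-hub" in namespace "qa".
    return env.get("GRID_URL", "http://selenium-hub.qa.svc.cluster.local:4444")


def smoke_test(target_url: str, artifact_dir: str = "artifacts") -> str:
    """Open target_url in a remote Chrome session and save a screenshot.

    Returns the page title so the caller can assert on it.
    """
    # Imported lazily so grid_url() is usable without Selenium installed.
    from selenium import webdriver

    Path(artifact_dir).mkdir(parents=True, exist_ok=True)
    driver = webdriver.Remote(
        command_executor=grid_url(),
        options=webdriver.ChromeOptions(),
    )
    try:
        driver.get(target_url)
        driver.save_screenshot(str(Path(artifact_dir) / "smoke.png"))
        return driver.title
    finally:
        # Always release the browser slot back to the Grid.
        driver.quit()
```

Because both the Grid address and the target URL resolve inside the cluster, the staging service never needs a public endpoint. The same function works from a laptop by setting `GRID_URL` to a locally running Grid.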

This is where DevSecOps maturity becomes visible. Security scanning, static analysis, and dynamic testing can operate side by side. Compliance checks are embedded into pull requests. Audit trails remain centralized.

Interestingly, the same principles apply in industries where user experience must be validated under regulatory scrutiny. Online gaming platforms, for example, rely heavily on automated UI testing to ensure fairness, transparency, and correct rendering across browsers. When teams build replay systems that showcase casino game sessions or highlight significant wins, they must verify visual consistency and transactional integrity across environments.

As replay features become more common—allowing users to revisit major wins or inspect the sequence of events that led to them—QA pipelines must validate animation timing, payout calculations, and cross-device responsiveness.

In one analysis of replay implementations, Vladyslav Lazurchenko underlines this point by referencing a list of new Michigan online casinos published by Jackpot Sounds. He presents it as an example of how regulated platforms publicly surface structured data about operators while maintaining strict compliance under the Michigan Gaming Control Board. That ecosystem includes technology providers such as Bragg, whose integrations demand predictable cross-browser behavior, and even payment flows involving Apple Pay, all of which must be tested consistently within secure pipelines.

This is not about promotion; it is about architectural rigor. Whether validating a Kubernetes dashboard or a gaming replay interface, deterministic testing in controlled infrastructure is essential.

Infinite Scaling Without Per-Minute Billing

The phrase “infinite scaling” is often marketing shorthand, but in containerized QA environments it becomes practical within the limits of the cluster. Horizontal Pod Autoscalers monitor metrics such as CPU and memory usage. When load increases, new browser instances spawn automatically. When demand drops, they are removed.
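The scaling behavior described above follows a simple documented rule: the Horizontal Pod Autoscaler multiplies the current replica count by the ratio of the observed metric to its target and rounds up, skipping changes when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces that rule in simplified form, ignoring pod readiness and stabilization windows, and builds a matching `autoscaling/v2` manifest. The Deployment name and the numeric targets are assumptions.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int,
                     max_replicas: int, tolerance: float = 0.1) -> int:
    """Simplified HPA rule: ceil(current * observed / target), clamped."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: no change
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


def hpa_manifest(deployment: str, min_replicas: int, max_replicas: int,
                 cpu_target_percent: int) -> dict:
    """Build a HorizontalPodAutoscaler targeting average CPU utilization."""
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            "scaleTargetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [{
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {
                        "type": "Utilization",
                        "averageUtilization": cpu_target_percent,
                    },
                },
            }],
        },
    }
```

For example, four browser pods averaging 90% CPU against a 60% target give a ratio of 1.5, so the rule asks for six pods. Note that `maxReplicas` is the practical ceiling on "infinite", and that utilization targets only work when the browser containers declare CPU requests.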

There is no per-minute surcharge. No concurrency ceiling imposed by subscription tiers. Teams decide how many nodes they want to allocate based on performance targets and budget constraints.

Cost optimization becomes measurable. Finance departments can forecast expenses based on infrastructure utilization rather than opaque SaaS metrics. Engineering leaders can experiment with higher levels of parallelization to reduce pipeline duration without worrying about runaway invoices.
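A forecast of this kind can be as small as the following sketch, which compares per-minute billing with utilization-based compute cost for the same workload. Every input (prices, browsers per node, utilization) is a placeholder to be replaced with your own figures; none of them are measurements or vendor quotes, and the model deliberately leaves out storage, networking, and engineering time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    runs_per_month: int
    browser_minutes_per_run: float  # summed across all parallel sessions

    @property
    def browser_minutes(self) -> float:
        return self.runs_per_month * self.browser_minutes_per_run


def saas_monthly_cost(w: Workload, price_per_browser_minute: float) -> float:
    """Per-minute billing: cost grows linearly with test volume."""
    return w.browser_minutes * price_per_browser_minute


def self_hosted_monthly_cost(w: Workload, browsers_per_node: int,
                             price_per_node_hour: float,
                             utilization: float) -> float:
    """Compute-based billing: pay for node-hours, adjusted for utilization.

    utilization is the fraction of paid node time that actually runs tests
    (0 < utilization <= 1); lower values model idle or warm-up time.
    """
    if browsers_per_node < 1:
        raise ValueError("browsers_per_node must be at least 1")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    node_hours = w.browser_minutes / 60 / browsers_per_node / utilization
    return node_hours * price_per_node_hour
```

Running both functions over a range of `runs_per_month` values shows where the two curves cross for a given set of assumptions, which is the kind of figure a finance team can track against actual cluster utilization.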

Moreover, cloud-native observability tools provide granular metrics. You can track how long each test suite runs, how much memory each browser consumes, and where bottlenecks occur. Optimization becomes data-driven rather than contract-driven.

Security Advantages in DevSecOps Context

Security in DevSecOps is not an afterthought; it is embedded from design to deployment. When QA infrastructure is self-hosted, organizations retain control over data flow. Test artifacts, credentials, and internal URLs never leave the perimeter.

Open source cross-browser testing tools can be hardened according to internal policies. Role-based access control limits who can trigger large-scale test runs. Network policies isolate browser pods from sensitive services. Secrets are injected securely via vault integrations.
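One way to express the network isolation mentioned above is a Kubernetes NetworkPolicy that lets browser pods talk only to the Grid hub, the namespace of the application under test, and DNS. The sketch below builds such a policy as a dictionary; the label values and namespace names are hypothetical, and the policy only takes effect on clusters whose network plugin enforces NetworkPolicy.

```python
def browser_network_policy(namespace: str, browser_label: str,
                           hub_label: str, target_namespace: str) -> dict:
    """Restrict browser pods to the hub, one target namespace, and DNS."""
    hub_peer = {"podSelector": {"matchLabels": {"app": hub_label}}}
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"isolate-{browser_label}", "namespace": namespace},
        "spec": {
            # Applies to the browser pods only.
            "podSelector": {"matchLabels": {"app": browser_label}},
            "policyTypes": ["Ingress", "Egress"],
            # Only the hub may open sessions on browser pods.
            "ingress": [{"from": [hub_peer]}],
            "egress": [
                # Nodes register with the hub's event bus.
                {"to": [hub_peer]},
                # The application under test, selected by namespace name.
                {"to": [{"namespaceSelector": {"matchLabels": {
                    "kubernetes.io/metadata.name": target_namespace}}}]},
                # DNS lookups on port 53, to any destination.
                {"ports": [{"protocol": "UDP", "port": 53},
                           {"protocol": "TCP", "port": 53}]},
            ],
        },
    }
```

Anything not listed, such as a production database in another namespace, is unreachable from the browser pods once the policy is applied. Tightening the DNS rule to the cluster's DNS pods is a reasonable next step.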

Organizational Impact and Developer Experience

Beyond infrastructure and cost, the shift influences culture. Developers who treat QA as code become more invested in test reliability. When they can inspect container logs directly, reproduce failures locally with Docker Compose, and adjust resource allocations via configuration files, feedback loops shorten.

The barrier between development and testing dissolves. Teams iterate on test architecture the same way they iterate on application features. Pull requests modify both code and environment definitions. Rollbacks apply equally to infrastructure changes.

This autonomy also accelerates onboarding. Instead of waiting for SaaS credentials and learning proprietary dashboards, new engineers interact with familiar tools:

- kubectl
- Docker CLI
- YAML manifests

Knowledge compounds internally rather than depending on vendor documentation.

The Future Trajectory

The trajectory is clear. As organizations mature in cloud-native practices, externalized testing clouds look increasingly like legacy constraints. They solved yesterday’s bottlenecks but introduce today’s inefficiencies.

Open source cross-browser testing tools integrated into Kubernetes or Docker environments represent a natural evolution. They align with Infrastructure-as-Code principles, reduce variable costs, and strengthen security postures. Most importantly, they restore ownership.

DevSecOps is ultimately about alignment—aligning speed with safety, innovation with accountability, automation with governance. QA strategy cannot remain isolated from that philosophy. When testing infrastructure scales as fluidly as application workloads, teams stop negotiating with their tooling and start optimizing their systems.

The future of QA is not defined by per-minute billing dashboards. It is defined by declarative configurations, elastic clusters, and pipelines that scale without friction. Organizations willing to internalize this model will not only reduce expenses but also gain a structural advantage in reliability, compliance, and developer velocity.

In that sense, scaling your QA strategy is less about tools and more about architecture. And architecture, when expressed as code, becomes the most powerful testing framework of all.