How important are software testing and software quality assurance in today’s software development world? This article explores that question and how organizations can get the most out of software testing.

Author: Peter Vogel

We can’t get the most out of testing if we don’t understand the underlying realities.

The chief reality is that we constantly prioritize our activities, including testing. If I’m building a web application to be used by my company’s internal staff, I have a different approach to web design and aesthetics than I do when building an application that’s going to be used by my company’s customers. With the internal application, I’m going to make sure it works and is usable, but I’m not going to worry nearly as much about how the application looks as I will with the external application.

That’s not because I don’t care about what sort of ugly pages I inflict on my fellow employees. It’s because, if working with these pages is part of their job, they probably don’t care how the pages look. In fact, they’d probably prefer that the pages look as much as possible like every other ugly page that I’ve created and that they have to work with. After all, the three things users like best are consistency, consistency, consistency.

However, if I’m worried that my company’s customers might go somewhere else (and I am), I’m going to spend a lot of time making sure that the external application looks like a site users will want to spend time on.

And, if we’re going to be honest about this, we make the same kinds of decisions when it comes to software testing.

The Reality Is That It Isn’t ‘Quality Assurance’

In reality, our current process shouldn’t even be called “Quality Assurance.” Calling testing/bug fixing “Quality Assurance” seems to suggest that people have conversations like this:

Person 1: I just bought this piece of software and not only does it not do anything useful, it takes forever to do it and is hard to use.

Person 2: Any bugs?

Person 1: Well … no.

Person 2: Well, that’s a quality piece of software then, isn’t it?

Removing defects isn’t about creating “quality”—removing all defects is about achieving the minimum level of acceptance (and don’t get me wrong—that’s something worth doing). But rather than calling the process “quality assurance,” a better name would be “risk management.” Let me explain why.

The Limitations of Reality

The reality of testing is that there’s never going to be enough time/people/money to produce bug-free software.

Aspirations matter here: We could deliver bug-free applications if we just wrote simpler applications. But we’re not going to do that. The SOLID principles and microservice architectures are, in fact, an attempt to build complex applications out of simple parts. The problem is that we use them to build complex applications: we haven’t so much solved the problem as moved it around.

With both simple and complex applications, it’s very easy to find bugs in the early stages of application development. But, in a complex application, as we address each of those bugs it takes longer and longer to find the “next bug.” Eventually, with what resources we have available to us, the time to find the “next bug” is so great that finding it would mean never releasing the software.

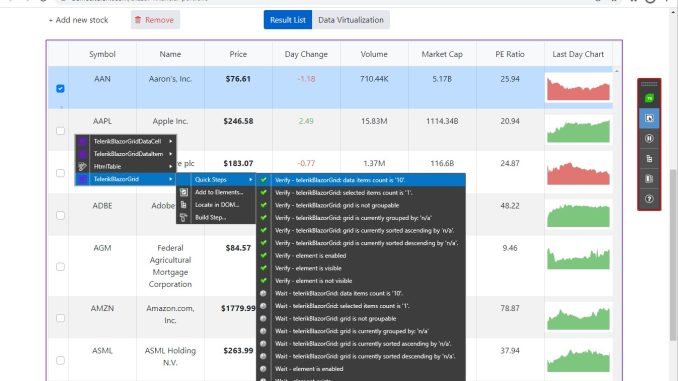
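The slowdown in finding the “next bug” can be illustrated with a toy model. This is a minimal sketch under an assumption of my own, not measured data: in every hour of testing, each remaining bug is found independently with the same small probability. The function name and the 1% figure are hypothetical.

```python
def expected_hours_to_next_bug(remaining_bugs: int, p_find_per_hour: float) -> float:
    """Expected testing hours until any one of the remaining bugs is found.

    Toy model (an assumption, not measured data): in each hour of testing,
    every remaining bug is found independently with probability p_find_per_hour.
    """
    if not 0.0 < p_find_per_hour <= 1.0:
        raise ValueError("p_find_per_hour must be in (0, 1]")
    if remaining_bugs <= 0:
        return float("inf")  # nothing left to find, so the search never ends
    # Probability that at least one remaining bug turns up in a given hour.
    p_any = 1.0 - (1.0 - p_find_per_hour) ** remaining_bugs
    return 1.0 / p_any  # mean of a geometric distribution


if __name__ == "__main__":
    for remaining in (50, 20, 10, 5, 2, 1):
        hours = expected_hours_to_next_bug(remaining, p_find_per_hour=0.01)
        print(f"{remaining:>2} bugs left: about {hours:.1f} hours to the next one")
```

Under these assumptions, the expected wait grows from roughly 2.5 hours with 50 bugs left to about 100 hours for the last one. Real projects won’t follow this curve exactly, but the shape is the point: every fix makes the next find more expensive.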

Automated testing tools (like Telerik Test Studio) help here by tremendously improving our ability to run every regression test we can automate. Automated testing also helps during exploratory testing by letting us create an automated test that tracks our progress with fixing the bugs we find (and the test then becomes part of our regression testing to make sure the bug never comes back). There’s more: Coupled with an effective reporting system, automated testing both reduces the time to determine the cause of a bug and helps keep management informed on how the software is progressing toward release. See this in action in this blog post.

Caption: Automated testing with an effective reporting system
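To make the “bug never comes back” idea concrete, here is a minimal sketch using Python’s standard `unittest` module rather than any particular commercial tool. The `order_total` function and the bug it once had (a discount above 100% producing a negative total) are hypothetical, invented for illustration.

```python
import unittest


def order_total(unit_price_cents: int, quantity: int, discount_percent: int = 0) -> int:
    """Hypothetical function under test: returns the order total in cents."""
    # Fix for the hypothetical bug: clamp the discount to the 0..100 range
    # so that an oversized discount can no longer push the total below zero.
    discount_percent = max(0, min(100, discount_percent))
    subtotal = unit_price_cents * quantity
    return subtotal * (100 - discount_percent) // 100


class OrderTotalRegressionTests(unittest.TestCase):
    def test_discount_over_100_percent_never_goes_negative(self):
        # Written while chasing the bug: it failed until the fix went in,
        # and now stays in the suite to catch any reappearance.
        self.assertEqual(order_total(1000, 2, discount_percent=150), 0)

    def test_normal_discount_still_applies(self):
        # Guards against the fix breaking the ordinary case.
        self.assertEqual(order_total(1000, 2, discount_percent=25), 1500)
```

Saved in a file, this can be run with `python -m unittest`. The first test does double duty: while I’m fixing the bug it tells me whether I’m done, and afterward it runs with every regression pass.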

But automated testing can’t help us find the “next bug.” For that, we need creative, experienced testers who can prioritize the tests they perform to find “the bugs that matter.” Even so, it’s only a matter of time until some bug goes undiscovered and costs your company real money (`cough`Pentium bug`cough` … or substitute the horror story of your choice).

So, as I said, what we’re doing is managing risk. Thinking of testing as risk management gives you a better basis for deciding how to spend your time: You’re not going to perform a test that doesn’t reduce your risk, or one where you can mitigate the risk at a low cost, especially if the cost of mitigating the risk is lower than the cost of finding the “next bug.”
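That decision rule can be sketched in a few lines. This is one possible reading, not a formula from any standard: I’m assuming risk can be estimated as probability times impact, that all costs are in the same unit, and that a test is skipped when it costs more than the risk it removes or when some other mitigation is cheaper. Every name and number below is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CandidateTest:
    name: str
    test_cost: float             # cost of designing and running the test
    failure_probability: float   # estimated chance the bug exists (0..1)
    failure_impact: float        # estimated cost if the bug reaches production
    mitigation_cost: Optional[float] = None  # cost of handling the risk another way

    def __post_init__(self) -> None:
        if self.test_cost <= 0:
            raise ValueError("test_cost must be positive")

    @property
    def risk_reduced(self) -> float:
        # Assumption: expected loss = probability x impact.
        return self.failure_probability * self.failure_impact


def worth_running(test: CandidateTest) -> bool:
    if test.risk_reduced <= test.test_cost:
        return False  # the test costs more than the risk it removes
    if test.mitigation_cost is not None and test.mitigation_cost < test.test_cost:
        return False  # cheaper to mitigate the risk than to test for it
    return True


def prioritize(tests: List[CandidateTest]) -> List[CandidateTest]:
    """Keep only the tests worth running, best return per unit of cost first."""
    keep = [t for t in tests if worth_running(t)]
    return sorted(keep, key=lambda t: t.risk_reduced / t.test_cost, reverse=True)


candidates = [
    CandidateTest("checkout payment", test_cost=8, failure_probability=0.2, failure_impact=500),
    CandidateTest("internal report layout", test_cost=4, failure_probability=0.3, failure_impact=5),
    CandidateTest("rare export timeout", test_cost=20, failure_probability=0.1,
                  failure_impact=400, mitigation_cost=3),
    CandidateTest("login lockout", test_cost=5, failure_probability=0.1, failure_impact=300),
]

if __name__ == "__main__":
    for t in prioritize(candidates):
        print(f"{t.name}: reduces risk by {t.risk_reduced:.0f} for a cost of {t.test_cost:.0f}")
```

With these made-up numbers, the report layout test is dropped because it costs more than the risk it covers, and the export timeout test is dropped because a cheap mitigation exists. The estimates themselves are the hard part; the code only makes the trade-off explicit.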

The Reality of the Value of Testing

Then there’s the reality of running tests: it’s the only tool we trust for finding bugs. While other processes have been suggested (Design by Contract or provably correct software, for example), we don’t believe in those tools in the way we believe in running tests. And it doesn’t matter if we’re talking about exploratory testing, regression testing, user acceptance testing or any other part of testing methodology: We believe in running tests.

That makes testing a necessary activity, not a value-added activity. You can prove this. Go to your users and ask them, “If we could produce bug-free software without testing, would you be unhappy?” I guarantee that not one of them will say, “But I’ll miss the testing sooooooo much!”

Every minute that we spend on necessary tasks is time taken away from value-added tasks. That means that you should spend as much time on testing as you have to … and not a minute more. And, as with an investment, you should make sure that every minute you spend on testing gives a great return.

Caption: Visual test recorder – an important instrument for any automation QA

So we should automate where possible to take our most expensive resource (people) out of the process. We should shift testing to earlier in the development process wherever it lowers our testing costs. We should integrate the people who benefit from testing (e.g., end users) into the process so that they come to value it or, if we can’t get users to value testing, we can at least implicate them when a bug makes it to production.

Scheduling Reality

The final reality is that you can’t start testing early enough. Start with testing/validating the requirements to ensure that developers are both building and testing the right things. Test code early so that you know the code meets requirements, and so that later code is built on, and integrates with, code that works.

Start testing early, prioritize your tests to manage risk, and think of your testing time and money as an investment: Where do I spend the least amount to get the biggest return? If you do those things, you’ll end up with a reality that you (and your company) can live in.

About the Author

Peter Vogel is a system architect and principal in PH&V Information Services. PH&V provides full-stack consulting from UX design through object modeling to database design. Peter also writes courses and teaches for Learning Tree International.